Agents that Reduce Work and Information Overload

Pattie Maes | Commun ACM 1994

In a Nutshell 🥜

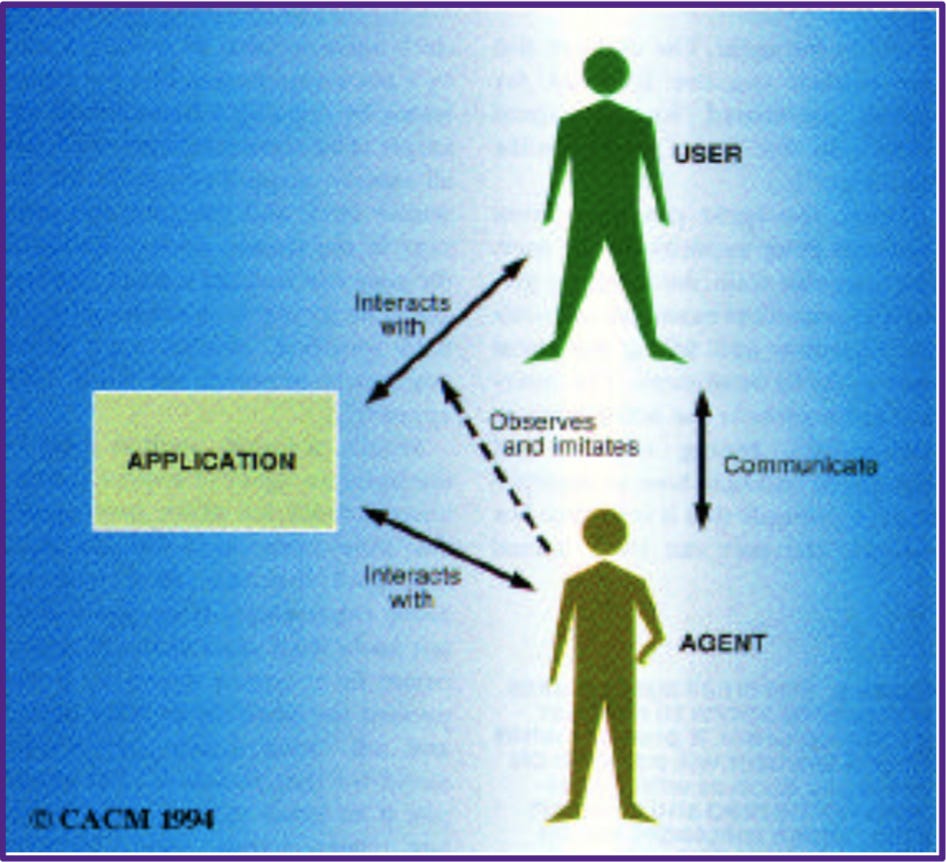

Maes¹ advocates the adoption of interface agents: AI-infused computer programs that actively assist users. This contrasts with direct manipulation², the dominant interaction metaphor at the time. The paper positions interface agents not as an interface layer between the user and the computer application, but as a personal assistant to the user (one that can be bypassed). Interface agents can assist users by performing tasks on their behalf, teaching them, helping multiple users collaborate, and monitoring events and procedures.

The paper explains two main problems that need to be solved when building software agents:

Competence. The agent needs enough knowledge to decide when to help the user, what to help with, and how to help.

Trust. The user needs to feel comfortable delegating tasks to the agent.

The paper then explains why two existing approaches (end-user programming and the knowledge-based approach) do not solve these two problems, and argues for an alternative, learning-based approach to building interface agents.

The learning-based approach only works under two conditions:

The application involves the user doing a substantial amount of repetitive behavior. This allows the agent to learn the regularities in the user’s actions.

The repetitive behavior is different for different users. Otherwise, a knowledge-based approach would be sufficient.

Over time, a learning-based agent becomes more competent at recognizing the user’s behaviors. Meanwhile, the user gradually builds up a model of how the agent makes its decisions and comes to trust it.

A learning-based agent can learn from four sources:

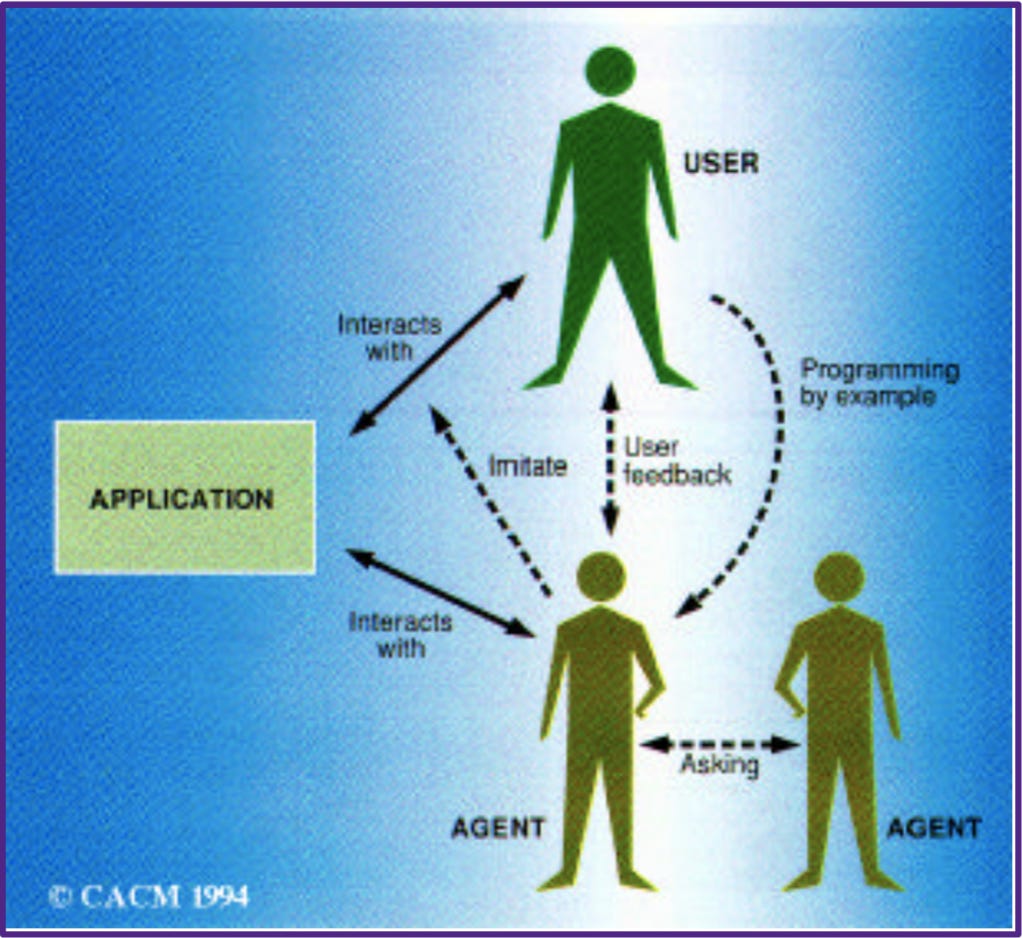

Observing the user. The agent can “look over the user’s shoulder” to recognize recurrent patterns (e.g., the user always marks emails from a particular sender as spam).

Receiving direct or indirect user feedback. The agent can receive direct feedback (e.g., the user marks an article as “like” or “dislike”) or indirect feedback (e.g., the user ignores an article the agent suggested).

Learning from explicit examples given by the user. The user can give hypothetical examples or rules that tell the agent how to handle particular situations (e.g., always add a new song from a particular artist to the to-listen playlist).

Asking other agents for advice. The agent may ask agents that assist other users with similar tasks for advice, since those agents may have built up more experience. Advice from different agents can carry different weights (e.g., advice from the agents of authoritative users weighs more, as does advice from agents with a good track record of recommending actions the user appreciated).
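To make the four sources concrete, here is a toy sketch of how they might feed a single agent. This is my own illustration and not the paper's implementation: the class name `SketchAgent`, the exact-match situation strings, the score increments, and the peer weights are all assumptions chosen for simplicity.

```python
from collections import Counter, defaultdict


class SketchAgent:
    """Toy learning-based agent (hypothetical; not the paper's implementation)."""

    def __init__(self):
        # situation -> Counter mapping each action to its accumulated evidence
        self.memory = defaultdict(Counter)

    def observe(self, situation, action):
        # Source 1: watch what the user actually does.
        self.memory[situation][action] += 1.0

    def feedback(self, situation, action, liked):
        # Source 2: feedback on a suggestion raises or lowers its evidence.
        self.memory[situation][action] += 1.0 if liked else -1.0

    def teach(self, situation, action, strength=5.0):
        # Source 3: an explicit example counts for more than one observation
        # (the default strength of 5.0 is an arbitrary assumption).
        self.memory[situation][action] += strength

    def own_scores(self, situation):
        # Return a copy so callers cannot modify the agent's memory.
        return Counter(self.memory.get(situation, {}))

    def suggest(self, situation, peers=()):
        # Source 4: add weighted advice from peers, given as (agent, weight) pairs.
        scores = self.own_scores(situation)
        for peer, weight in peers:
            for action, score in peer.own_scores(situation).items():
                scores[action] += weight * score
        if not scores:
            return None
        action, score = max(scores.items(), key=lambda kv: kv[1])
        # Stay silent unless the evidence is positive.
        return action if score > 0 else None


mine, colleague = SketchAgent(), SketchAgent()
for _ in range(3):
    colleague.observe("mail from newsletter", "archive")

print(mine.suggest("mail from newsletter"))                            # None
print(mine.suggest("mail from newsletter", peers=[(colleague, 0.5)]))  # archive
```

In this sketch a brand-new agent has nothing to suggest on its own, but it can still offer a suggestion by borrowing the experience of a peer agent, scaled by how much that peer is trusted.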

The paper concludes with examples of learning-based agents that the author's group has implemented and tested, including agents for electronic mail handling, meeting scheduling, electronic news filtering, and recommending books, music, and other entertainment.

Some Thoughts 💭

The paper is very well articulated, and I appreciate its clear overview of the approaches to building interface agents and of the two prerequisite conditions for the learning-based approach.

I also agree with the paper’s point that users should be able to “instruct the agent to forget or disregard some of their behavior”. This is an issue I have with many of today’s recommendation algorithms.

For example, once I click on a recommendation out of curiosity, perhaps because of a clickbait title, the algorithm overwhelms me with a ton of similar clickbait videos. This seems to be especially salient for new accounts because of the cold start problem: with so little history, a single click dominates the profile.
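A small sketch of what such a “forget” control could look like. This is purely hypothetical and does not describe any real recommender: the class `ForgetfulHistory`, its method names, and the idea of profiling by most-clicked topic are assumptions for illustration.

```python
from collections import Counter


class ForgetfulHistory:
    """Hypothetical click history that lets the user retract individual events."""

    def __init__(self):
        self.events = []  # list of (item_id, topic) tuples, oldest first

    def record(self, item_id, topic):
        self.events.append((item_id, topic))

    def forget(self, item_id):
        # Drop every event for this item so it no longer influences the profile.
        self.events = [event for event in self.events if event[0] != item_id]

    def top_topic(self):
        # The topic clicked most often, or None when there is no history.
        counts = Counter(topic for _, topic in self.events)
        return counts.most_common(1)[0][0] if counts else None


history = ForgetfulHistory()
history.record("video-1", "clickbait")  # one curious click on a new account
print(history.top_topic())              # clickbait
history.forget("video-1")
print(history.top_topic())              # None
```

On a new account the single click is the entire profile, so being able to retract it resets the recommendations instead of locking them in.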

¹ Maes, P. (1995). Agents that reduce work and information overload. In Readings in human–computer interaction (pp. 811-821). Morgan Kaufmann.

² Shneiderman, B. (1993). 1.1 direct manipulation: a step beyond programming languages. Sparks of innovation in human-computer interaction, 17, 1993.